
How to Build a Conversational Chatbot and Avoid If/Else Statements

ElifTech’s Cool Projects Department (CPD) is working at full tilt. In the previous experiment, we built a simple intent-based AI chatbot. We used Microsoft’s cognitive service LUIS and made the chatbot more human-like by adding TTS (text-to-speech) and STT (speech-to-text) with the Say.js library. This time, we challenged ourselves to create a bot that understands and manages the context of a conversation. Since hand-written bot “state management” and if/else conditions are things of the past, we looked for an up-to-date tool that fits our needs.

Conversational Chatbot Development: How to Build Chatbots without If/Else Statements?

The silver bullet we found is Rasa, an open-source framework that completely changed our view of bot building. In this new experiment, we built an action chatbot that understands intents, fulfills actions, and manages the dialog on its own.

Insight into Rasa’s Approach

Rasa is a machine-learning framework for building conversational software. To make our chatbot understand intents, we used Rasa NLU, a natural language processing tool for classifying intents and extracting entities. For dialog flow management, we used Rasa Core. Instead of relying on if/else statements, it learns the chatbot logic from example conversations.

Rasa uses interactive learning, meaning every step of the intelligent bot is followed by feedback (we talk about this in the Interactive Learning section). A dozen or two short conversations are usually enough to make a solid first version of the chatbot software. Let’s take a closer look at it.

The old-fashioned approach to building an AI chatbot is to track the dialog state by hand with a bunch of if/else conditions. For instance, let’s assume we’re building a bot for booking flight tickets.

Here’s how the logic works:

- You ask a chatbot AI to book a flight to London.

- The bot asks you to specify the date.

- You specify the date.

- The flight is booked.

That’s the ideal scenario. But things rarely run that smoothly, and they can go downhill fast:

- You ask a chatbot to book a flight to London.

- The bot asks you to specify the date.

- You choose the date.

- You find out you have to visit London on your way to Rome.

- The chatbot doesn’t know what to do...

Darn it! Now you have to add another condition. And another one. And another one. You’ll constantly need to add more conditions to keep making your bot more advanced.
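To make the pain concrete, here is a minimal sketch of the if/else approach for the flight example above. It is not code from our project: the `handle` function, the `state` dictionary and the keyword matching are all made up for illustration.

```python
def handle(state, message):
    """Hand-written dialog state machine: every new case needs another branch."""
    text = message.lower()
    if state["step"] == "start":
        if "book" in text and "flight" in text:
            # Hypothetical keyword matching instead of real NLU.
            state["destination"] = "London" if "london" in text else None
            state["step"] = "ask_date"
            return "When do you want to fly?"
        return "Sorry, I can only book flights."
    elif state["step"] == "ask_date":
        if "rome" in text:
            # The user changed the plan mid-dialog: a case nobody wrote a branch for.
            return "Sorry, I don't know what to do..."
        state["date"] = message
        state["step"] = "done"
        return f"Booked a flight to {state['destination']} on {state['date']}."
    return "Your flight is already booked."


state = {"step": "start"}
print(handle(state, "Book a flight to London"))
print(handle(state, "Actually, I need to go via London to Rome"))
```

Supporting the Rome detour means adding a new step, new state fields and new branches in every existing step, which is exactly the growth we wanted to avoid.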

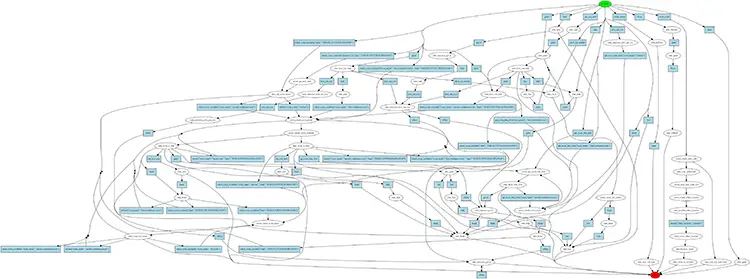

Rasa takes a fundamentally different approach. Instead of writing state conditions to manage the dialog flow, you train the bot on lots of example stories with variations. The graph below illustrates the variations on “get information about available rooms” and casual conversation skills. Imagine using if/else statements for this task!

BTW, you can extract the graph from Rasa Core using a simple command:

    python -m rasa_core.visualize -d domain.yml -s data/stories -o graph.png

The Brain and Body of Our Bot

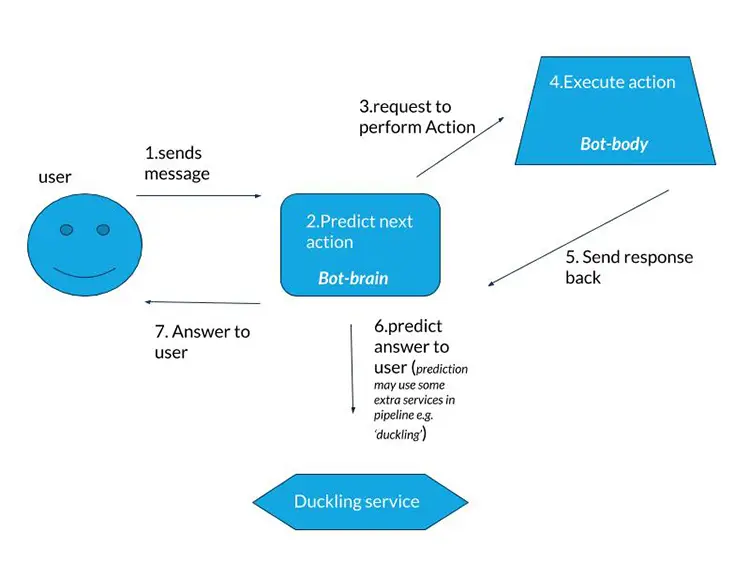

To begin, we used Rasa’s starter pack. The Rasa Stack server, built on Python, is the brain of our artificial intelligence chatbot. The trained NLU and dialog models live here. As for the bot’s body, we used a separate server built on Node.js. It performs the requested actions (e.g., makes API calls or generates an answer for the user) and returns the results to the brain.

The brain listens to the input and extracts NLU data from the utterance. Then, the dialog model uses the data to decide on the next action: give an answer to the user, make an API call, listen, etc. Next, the bot-brain makes an HTTP request to the Action server (the bot-body) to perform the action. When the brain receives the answer from the Action server, it predicts the next step.
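The loop described above can be sketched in a few lines. This is only an illustration under our own assumptions: the three helper functions are hypothetical stand-ins for the trained NLU model, the trained dialog model and the HTTP call to the action server, not Rasa APIs.

```python
def extract_nlu(utterance):
    """Stand-in for the NLU model: returns an intent and entities."""
    if "free" in utterance.lower():
        return {"intent": "check_room_available", "entities": {"room_name": "factory"}}
    return {"intent": "greet", "entities": {}}


def predict_next_action(history):
    """Stand-in for the dialog model (a lookup here, learned from stories in Rasa)."""
    last = history[-1]
    if last == "check_room_available":
        return "action_check_room_available"
    if last == "greet":
        return "utter_greet"
    return "action_listen"


def call_action_server(action, entities):
    """Stand-in for the HTTP request from the bot-brain to the bot-body."""
    return f"ran {action} with {entities}"


def handle_utterance(utterance):
    nlu = extract_nlu(utterance)
    history = [nlu["intent"]]
    results = []
    while True:
        action = predict_next_action(history)
        if action == "action_listen":
            break  # the brain waits for the next user message
        results.append(call_action_server(action, nlu["entities"]))
        history.append(action)  # the result feeds the next prediction
    return results


print(handle_utterance("Is the factory free?"))
```

The point of the sketch is the shape of the loop: predict, delegate to the body, record the outcome, predict again, until the predicted action is to listen.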

NOTE 1: We are talking about Rasa Core version 0.11. In the previous version 0.10, the scheme was backward: the bot-body listened to the input, sent the data to the bot brain, and received the next predicted action to perform. Once the bot-body performed the action, it notified the bot-brain about it.

NOTE 2: With Rasa Core, you can also write the action server in Python instead of Node.js.

Digging into the Bot-Brain

Domain Content

The Rasa Core documentation states: The Domain defines the universe in which your bot operates. It specifies the intents, entities, slots, actions and templates used by the chatbot.

Here’s our domain.yml:

    intents:
      # add your intents
      - greet
      - thank
      - bye
      - deny
      - affirm
      - create_event
      - remove_event
      - inform
      - show_my_events
      - check_room_available
      - are_you_sure
      - get_room_free_slots
      - None
      - help

    entities:
      - event_name
      - room_name

    slots:
      event_name:
        type: text
      room_name:
        type: text
      time:
        type: text
      duration:
        type: text
      normalized_duration:
        type: text
      is_room_available:
        type: bool
        initial_value: False
      is_room_exists:
        type: bool
        initial_value: False
      rooms_free_slots:
        type: list
        initial_value: []

    templates:
      # templates the bot should respond with
      utter_greet:
        - "Hey, how can I help you?"
      utter_thank:
        - "You're welcome!"
      utter_bye:
        - "Goodbye"
      utter_how_can_help:
        - "how can i helps"
      utter_on_it:
        - "I am on it"
      utter_ask_event_name:
        - "what is event name"
      utter_event_saved:
        - "event saved"
      utter_ask_room_name:
        - "what is room name"
      utter_room_is_free:
        - "room is free"
      utter_room_is_busy:
        - "room is busy"
      utter_fallback:
        - "sorry, I did not get you..."
      utter_room_not_exists:
        - "room not exists"
      utter_sure:
        - "yeah pal, i'm sure"
      utter_show_free_slots:
        - 'the {room_name} is free/busy at (todo:make sure real bot receive free time)'
      utter_help:
        - 'Here is what I can do pal...'

    actions:
      # all the utter actions from the templates, plus any custom actions
      - utter_greet
      - utter_thank
      - utter_bye
      - utter_how_can_help
      - utter_on_it
      - utter_ask_event_name
      - utter_event_saved
      - utter_ask_room_name
      - utter_room_is_free
      - utter_room_is_busy
      - utter_fallback
      - utter_room_not_exists
      - utter_sure
      - utter_show_free_slots
      - utter_help
      - action_create_event
      - action_remove_event
      - action_show_my_events
      - action_check_room_available
      - action_check_room_exists
      - action_get_room_free_slots
      - action_get_new_slots

Let us explain:

- Intents include the defined intents extracted by Rasa NLU from the utterances.

- Entities include the defined entities extracted by Rasa NLU from the utterances.

- Slots are the bot’s memory; they store data about the object we talk about (room name, time, etc.).

- Templates include the default templates a bot uses to answer (NOTE: We use our own action server to generate bot responses, so the templates from domain.yml are not actually used, but the template section still has to be there).

- Actions include the defined actions the bot-brain “asks” the bot-body to perform (check if the room is available, check if a user exists, etc.).

When the bot-brain receives an utterance from the user, the NLU part extracts the information from it: the intent and any entities. If a slot and an entity have the same name, the entity value is automatically stored in that slot.
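The slot auto-fill rule is easy to picture in code. Below is a minimal sketch, not Rasa’s implementation: `fill_slots` is a hypothetical helper, and we assume entities arrive as a list of dictionaries with `entity` and `value` keys.

```python
# Slot names taken from the domain above (a subset, for brevity).
SLOT_NAMES = {"event_name", "room_name", "time", "duration"}


def fill_slots(slots, entities):
    """Copy each entity value into the slot that has the same name."""
    updated = dict(slots)
    for entity in entities:
        if entity["entity"] in SLOT_NAMES:
            updated[entity["entity"]] = entity["value"]
    return updated


entities = [
    {"entity": "room_name", "value": "space"},
    {"entity": "person", "value": "Bob"},  # no slot with this name, so it is ignored
]
print(fill_slots({"room_name": None, "time": None}, entities))
```

This is why our room_name and event_name entities have matching slots in the domain: the bot remembers them without any extra code.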

Training Data for NLU

How do we get entities from the user’s utterances? To extract the NLU data, Rasa NLU uses a pipeline. Here it is:

    language: "en"

    pipeline:
      - name: "nlp_spacy"
      - name: "tokenizer_spacy"
      - name: "intent_featurizer_spacy"
      - name: "intent_classifier_sklearn"
      - name: "ner_crf"
      - name: "ner_synonyms"
      - name: "ner_duckling_http"
        url: "http://0.0.0.0:8000"
        dimensions: ["time", "duration"]
        timezone: "Europe/Kiev"

We decided to use this pipeline since we had fewer than 1,000 training examples. The pipeline contains Duckling, a Clojure library that parses text into structured data. It is essential in our case, as the user talks with the bot about times and dates. Duckling extracts the time and duration entities (dimensions: ["time", "duration"]). We used ner_synonyms to map the different ways people name our conference rooms onto one canonical name per room.

The pipeline is defined in the nlu_config.yml file.

At this stage, the Rasa NLU service isn’t ready to extract data from utterances; we need to train it with some intent examples. We’ll use ‘data/nlu_data.md’ for it.

Here’s an example of our training data for the check_room_available intent. Take a look at the synonyms.

    ## intent: check_room_available ## ← defined intent here
    - is [second hall](room_name) free? ## list of example utterances
    - tell me if [factory](room_name) is free at 1pm
    - I am curious if [space](room_name) is empty in an hour
    - would you be so kind to ping me if [big hall](room_name) is free now?
    - Has anyone occupied [first room](room_name) at 5 o'clock

    ## synonym:factory ## defined synonym for first room name
    - factori
    - Factori
    - Factory
    - Factory room
    - main conference room
    - first room
    - first hall
    - main hall
    - main room
    - the big one
    - main one

    ## synonym:space ## defined synonym for second room name
    - Space
    - sPace
    - the space
    - second hall
    - room 2
    - second room
    - small room
    - small conference hall
    - room two
    - small one
    - second one

Look at the line “- is [second hall](room_name) free?”. The annotation format is [entity value goes here](entity name goes here).

NOTE: Try to use different sentences for one entity.
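To show how the annotation and the synonym lists work together, here is a small sketch that reads one annotated example and maps the entity value to its canonical room name. It only imitates the idea; it is not how Rasa NLU parses or trains. `parse_example` is a hypothetical helper, and the `SYNONYMS` table is a hand-picked subset of the lists above with exact-match lookup.

```python
import re

# [entity value](entity name)
ANNOTATION = re.compile(r"\[([^\]]+)\]\(([^)]+)\)")

# A few synonym -> canonical name pairs copied from the training data above.
SYNONYMS = {
    "second hall": "space",
    "room 2": "space",
    "first room": "factory",
    "main hall": "factory",
}


def parse_example(line):
    """Return (plain_text, entities) for one annotated training example."""
    entities = []

    def strip_markup(match):
        value, name = match.group(1), match.group(2)
        # Replace a known synonym with the canonical room name.
        entities.append({"entity": name, "value": SYNONYMS.get(value, value)})
        return value

    text = ANNOTATION.sub(strip_markup, line.lstrip("- ").strip())
    return text, entities


print(parse_example("- is [second hall](room_name) free?"))
```

Whatever the user calls the room, the bot-body then only has to deal with the two canonical names, factory and space.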

To run the training, we have a Makefile target:

    train-nlu:
    	python -m rasa_nlu.train -c nlu_config.yml --data data/nlu_data.md -o models --fixed_model_name nlu --project current --verbose

Type “make train-nlu” in the terminal and it starts the training process.

This is how you set up Rasa NLU data extraction in the Rasa Stack. Now, we have to set up and train Rasa Core to manage the dialog flow with intents, entities and slots.

Training Data for Dialog Management

Our goal is to train the dialog model on data/stories/check_room_available.md, where we write example stories for Rasa Core to learn from.

We need the following functionality:

- A bot exchanges greetings with a user.

- When the user asks to check the availability of a specific room, the bot checks whether the required room exists. Then, it checks if the room is available.

- The bot says “room is free” or “room is busy.”

- If the room doesn’t exist, the bot answers “no such room.”

With the if-approach to bot building, you’ll have quite a few “ifs” in this small dialog: if a user doesn’t say “hello” and asks about the room straight away; if a user doesn’t specify the room name, etc.

Let’s write a story using the Rasa approach:

    ## Generated Story -1857005876918346782
    * greet
        - utter_greet
        - utter_how_can_help
    * check_room_available{"room_name": "second conference room", "time": "2018-09-13T14:00:00.000+03:00"}
        - slot{"room_name": "second conference room"}
        - slot{"time": "2018-09-13T14:00:00.000+03:00"}
        - action_check_room_exists
        - slot{"is_room_exists": true}
        - action_check_room_available
        - slot{"is_room_available": false}
        - slot{"time": "2018-09-13T14:00:00.000+03:00"}
        - utter_room_is_busy

    ## Generated Story 8402366997527664421
    * greet
        - utter_greet
        - utter_how_can_help
    * check_room_available{"time": "2018-09-13T13:00:00.000+03:00"}
        - slot{"time": "2018-09-13T13:00:00.000+03:00"}
        - action_check_room_exists
        - slot{"is_room_exists": false}
        - utter_room_not_exists

In the Makefile, we have:

    train-core:
    	python -m rasa_core.train -d domain.yml -s data/stories -o models/current/dialogue --epochs 200 --nlu_threshold 0.20 --core_threshold 0.2

You probably noticed --nlu_threshold 0.20 --core_threshold 0.2. These flags configure the fallback: when the confidence of the NLU prediction or of the dialog (Core) prediction drops below the threshold (here 0.2, i.e., the bot is less than 20% sure), the bot runs a fallback action and says something like “Sorry, I don’t understand.”
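The fallback rule boils down to a comparison against the two thresholds. Here is a minimal sketch of that logic, not Rasa Core’s actual policy code: `choose_action` is a hypothetical helper, and using utter_fallback from our domain as the fallback action is our assumption for this example.

```python
NLU_THRESHOLD = 0.2   # mirrors --nlu_threshold 0.20
CORE_THRESHOLD = 0.2  # mirrors --core_threshold 0.2
FALLBACK_ACTION = "utter_fallback"  # assumed fallback action for this sketch


def choose_action(intent_confidence, predicted_action, action_confidence):
    """Fall back when either the intent or the action prediction is too uncertain."""
    if intent_confidence < NLU_THRESHOLD or action_confidence < CORE_THRESHOLD:
        return FALLBACK_ACTION
    return predicted_action


print(choose_action(0.91, "action_check_room_exists", 0.85))
print(choose_action(0.12, "action_check_room_exists", 0.85))
```

A low threshold like 0.2 keeps the bot talkative; raising it makes the bot admit confusion more often instead of guessing.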

In the terminal, we type “make train-core.”

Now, if we ask (without greeting) “Is the big hall free in an hour?” the bot checks if the room exists and whether it’s free. Then it gives us an answer.

You need to create dozens of stories if you want decent results, which is a rather inefficient way to make training data. Interactive learning is the solution.

Interactive Learning

With interactive learning, your bot receives feedback while you talk to it. Let’s use this approach to train our bot to manage various dialogs and cases. First, we type our initial utterance in the console. The bot shows us what it extracts from the sentence (intents and entities) and what the next predicted action is. At this point, the chatbot asks for approval: whether it’s right or not. As you provide the correct answers and the proper next action, the AI immediately retrains the dialog model. This way, you can create a lot of cases to be saved in stories.md and pasted into the dialog training data afterward.

NOTE: At the moment, the bot cannot retrain the NLU data; it can only fix dialog stories. If your chatbot gets an intent wrong, for example, you have to go back and fix the NLU training data in data/nlu_data.md.

In the Makefile:

    core-learn:
    	python -m rasa_core.train --online -o models/current/dialogue -d domain.yml -s data/stories -u models/current/nlu --endpoints learn_endpoints.yml

Parts of the Bot-Body: Action Server

Here are the routes on the Node.js server that receive requests from the bot-brain (the Rasa Core server):

    // perform bot actions
    server.post('/webhook', botPerformAction);

    // generate bot messages
    server.post('/nlg', botGenerateUtter);

You can find more information on the request and response formats for actions and bot answer generation in Rasa’s official documentation.

Our Node.js bot-body server performs the requested action using the Google Calendar we use to manage office conference rooms (through the Google Calendar APIs), DB requests, etc. The bot-brain names the action in its request:

    {
      "next_action":"check_room_available"
      ...
    }
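For readers who prefer the Python route from NOTE 2, here is a rough sketch of what an action handler does with such a request. Everything beyond `next_action` is an assumption on our side: the `tracker`/`slots` layout of the request, the `events`/`responses` shape of the answer, and the `BOOKED` set that stands in for the real Google Calendar lookup. Check Rasa’s documentation for the exact formats.

```python
# Hypothetical stand-in for the calendar: (room, time) pairs that are taken.
BOOKED = {("factory", "2018-09-13T14:00:00.000+03:00")}


def perform_action(request):
    """Handle one action request from the bot-brain and return slot updates."""
    action = request["next_action"]
    slots = request.get("tracker", {}).get("slots", {})  # assumed request layout

    if action == "action_check_room_available":
        busy = (slots.get("room_name"), slots.get("time")) in BOOKED
        return {
            # Assumed response shape: a slot event the brain uses for its next prediction.
            "events": [{"event": "slot", "name": "is_room_available", "value": not busy}],
            "responses": [],
        }
    # Unknown action: report nothing back.
    return {"events": [], "responses": []}


request = {
    "next_action": "action_check_room_available",
    "tracker": {"slots": {"room_name": "factory", "time": "2018-09-13T14:00:00.000+03:00"}},
}
print(perform_action(request))
```

The handler does not decide what to say next. It only reports facts (here, the is_room_available slot), and the dialog model picks utter_room_is_free or utter_room_is_busy based on them, just like in the stories above.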

We also generate bot responses:

    {
      "text": "Hey my friend! Main room is available today at 3 p.m. for 2 hours",
      "buttons": [],
      "image": null,
      "elements": [],
      "attachments": []
    }
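A response like the one above can be produced by a small function that turns a template name and the current slots into text. This is a sketch under our own assumptions, not our production Node.js code: `generate_utterance` and its wording are invented for illustration, and how the template name and slots are pulled out of the incoming request is left out.

```python
def generate_utterance(template, slots):
    """Build a bot response body for the given template name and slot values."""
    room = slots.get("room_name") or "The room"
    texts = {
        "utter_room_is_free": f"{room} is free",
        "utter_room_is_busy": f"{room} is busy",
        "utter_room_not_exists": "Sorry, there is no such room",
    }
    return {
        # Fall back to the domain's fallback wording for unknown templates.
        "text": texts.get(template, "sorry, I did not get you..."),
        "buttons": [],
        "image": None,
        "elements": [],
        "attachments": [],
    }


print(generate_utterance("utter_room_is_free", {"room_name": "factory"}))
```

Generating text on the bot-body side is what lets us put real data (room names, free time slots) into the answers instead of the static strings in domain.yml.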

Introducing Our Bot to the World

To show our AI chatbot to the world (well, actually to our co-workers only), we used Microsoft Bot Framework. In the future, we plan to try the Rasa Core channel services for Facebook, Slack, and more, or we may build custom channels.

Currently, our chatbot can tell you which rooms are available and when. In the future, we plan to teach the chatbot to add, edit and remove room bookings, send notifications to people and be nice and friendly (and even use smileys ;-). We look forward to trying Memory Graph to improve the bot’s memory and test the Rasa Stack trained models together with large human dialog datasets to teach our chatbot some casual talk. As the cherry on top, we are going to reward our creation with ears and a voice with the help of the Google Home smart device.

Conversational AI is the future. It’s rocking eLearning, healthcare, insurance, banking, telecom and other industries. If your company has decided it needs a conversational chatbot, contact ElifTech. We’ll be glad to help you out!
